On his Surprisingly Free podcast last week, Jerry Brito had a great interview with Chicago law professor Joseph Isenbergh about the competition between open and closed systems. As we’ve seen, there’s been a lot of debate recently about the iPhone/Android competition and the implications for the perennial debate between open and closed technology platforms. In the past I’ve come down fairly decisively on the “open” side of the debate, criticizing Apple’s iPhone App Store and the decision to extend the iPhone’s closed architecture to the iPad.

In the year since I wrote those posts, a couple of things have happened that have caused some evolution in my views: I used a Linux-based desktop as my primary work machine for the first time in almost a decade, and I switched from an iPhone to a Droid X. These experiences have reminded me of an important fact: the user interfaces on “open” devices tend to be terrible.

The power of open systems comes from their flexibility and scalability. The TCP/IP protocol stack that powers the Internet allows a breathtaking variety of devices—XBoxen, web servers, iPhones, laptops and many others— to talk to each other seamlessly. When a new device comes along, it can be added to the Internet without modifying any of the existing infrastructure. And the TCP/IP protocols have “scaled” amazingly well: protocols designed to connect a handful of universities over 56 kbps links now connect billions of devices over multi-gigabit connections. TCP/IP is so scalable and flexible because its designers made as few assumptions as possible about what end-users would do with the network.

These characteristics—scalability and flexibility—are simply irrelevant in a user interface. Human beings are pretty much all the same, and their “specs” don’t really change over time. We produce and consume data at rates that are agonizingly slow by computer standards. And we’re creatures of habit; once we get used to doing things a certain way (typing on a QWERTY keyboard, say) it becomes extremely costly to retrain us to do it a different way. And so if you create an interface that works really well for one human user, it’s likely to work well for the vast majority of human users.

The hallmarks of a good user interface, then, are simplicity and consistency. Simplicity economizes on the user’s scarce and valuable attention; the fewer widgets on the screen, the more quickly the user can find the one she needs and move on to the next step. And consistency leverages the power of muscle memory: the QWERTY layout may have been arbitrary initially, but today it’s supported by massive human capital that dwarfs whatever efficiencies might be achieved by switching to another layout.

To put it bluntly, the open source development process is terrible at this. The decentralized nature of open source development means that there’s always a bias toward feature bloat. If two developers can’t decide on the right way to do something, the compromise is often to implement it both ways and leave the final decision to the user. This works well for server software; an Apache configuration file is long and hard to understand, but that’s OK because web servers mostly interact with other computers rather than people, so flexibility and scalability are more important than user-friendliness. But it tends to work terribly for end-user software, because compromise tends to translate into clutter and inconsistency.

In short, if you want to create a company that builds great user interfaces, you should organize it like Apple does: as a hierarchy with a single guy who makes all the important decisions. User interfaces are simple enough that a single guy can thoroughly understand them, so bottom-up organization isn’t really necessary. Indeed, a single talented designer with dictatorial power will almost always design a simpler and more consistent user interface than a bottom-up process driven by consensus.

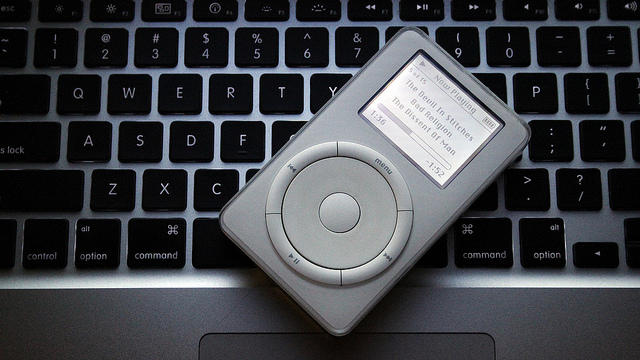

This strategy works best for products where the user interface is the most important feature. The iPod is a great example of this. From an engineering perspective, there was nothing particularly groundbreaking about the iPod, and indeed many geeks sneered at it when it came out. What the geeks missed was that a portable music player is an extremely personal device whose customers are interacting with it constantly. Getting the UI right is much more important than improving the technical specs or adding features.

By paring the interface down to 5 buttons and a scroll wheel, Apple enabled new customers to learn how to use it in a matter of seconds. The uncluttered, NeXT-derived column view made efficient use of the small screen and provided a consistent visual metaphor across the UI. And by coupling the iPod with its already-excellent iTunes software, Apple was able to offload many functions, like file deletion and playlist creation, onto the user’s PC. You can only achieve this kind of minimalist elegance if a single guy has ultimate authority for the design.

The iPhone also bears the hallmarks of Steve Jobs’s top-down design philosophy. It’s a much more complex device than the iPod, but Apple goes to extraordinary lengths to ensure that every iPhone app “feels” like it was designed by the same guy. They’ve created a visual language that allows experienced iPhone users to tell at a glance how a new application works. As just one example, dozens of applications use the “column view” metaphor popularized by the iPod. The iPhone lacks a hardware back button, but every application puts the software “back” button in exactly the same place on the screen. An iPhone user quickly develops “muscle memory” to automatically click that part of the screen to perform a “back” operation. The decision to use the upper-left-hand corner of the screen (rather than the lower-left, say) was much less important than getting every application to do it the same way.

In short, I don’t think it’s a coincidence that the devices with the most elegant UIs come from a company with a top-down, almost cult-like, corporate culture. In my next post I’ll talk about how Google’s corporate culture shapes its own products.

Update: Reader Gabe points to this excellent 2004 post by John Gruber on the subject of free software usability.

tim,

you tout the clean integration that the iphone has with itunes as a plus, but i would like to point out that i never have to sync my android phone with a desktop ever. it manages to do everything it needs to do without requiring a desktop computer at all. of course mr. jobs desires to keep it this way for a couple of reasons. it promotes apple computers and it aids in managing oppressive digital rights management.

i am curious what you dislike about your linux desktop. i use ubuntu at home and windows (xp) at work and i find the basic gnome desktop provided with ubuntu to be much more user friendly than xp. i like the places menu tied to the nautilus shortcuts to be an excellent way to manage my documents and navigate. the way to safely remove thumb drives is much simpler and more intuitive than the windows kludge. i have multiple desktops and my interface is extremely customizable. if you happen not to like gnome, there are several other options to try unlike the single microsoft interface or the single apple interface. in any case methinks ‘sucks’ is far too harsh a term.

in android land the various manufacturers are trying out tweaks on the ui to differentiate their offerings. the winners of this winnowing process will likely make their way into newer versions of android. mr. jobs insists on referring to this as fragmentation but it is equally valid and perhaps more accurate to label the iphone interface as ossified.

Actually I touted the integration of the *iPod* with iTunes as a plus. The iPod didn’t have a wireless connection, so it needed some way to transfer music from the computer, and the approach Apple came up with was way, way better than anything else on the market in the early part of the decade.

I’m actually ambivalent about the iPhone’s iTunes integration because as you say Android does a pretty good job of integrating with “the cloud.” However, one place where Android is clearly way behind is in music playing. The Android music and podcast playing apps are way, way behind the iPhone versions, which is why I’ve continued carrying my iPhone around for audio playing purposes.

On the Linux desktop: I found the Ubuntu/Gnome UI cluttered and missing key pieces of functionality. There tended to be a bunch of different ways to do the same thing, which in practice means wasted screen real estate and user confusion. The panel crashed several times during the summer I was using it. There didn’t seem to be any counterpart to Mac OS X’s Expose. The terminal app uses ctl-shift-c and ctl-shift-v for copying and pasting, while my browser used ctl-c and ctl-v, causing me to repeatedly hit the wrong thing and interrupt whatever I was doing. And so forth. I haven’t used a Windows box in years so I can’t really compare it but I found the Ubuntu user experience much inferior to the Mac experience.

Now, I realize that Gnome is highly customizable, and I have no doubt that if I put a lot of time into learning the relevant configuration files and downloading extras, I could get something roughly comparable to what Mac OS X does out of the box. Indeed, I had a couple of people tell me there was an Expose-type tool I could run. But this is part of my point: it’s a pain in the ass to have to extensively customize your UI before you can start using it, and the vast majority of users won’t do that. So while Ubuntu may work fine for power users, it’s not a good general-purpose solution for the 99% of the population that’s going to simply use the default UI.

Thank you for saying what I’ve been thinking for a while. As much as I want to believe in open source software (I have a dual-boot OS X/Ubuntu setup on my MacBook), I don’t ever see it being competitive with closed-source software for precisely the reasons you lay out. Open source seems to work best for back-end work (Not only Apache, but also development languages like Python, Ruby, etc.) and for programmers who are used to customizing everything (e.g., emacs, vim), but for front-end and general-use applications, most open source software feels like a pale imitation of the proprietary stuff. The few exceptions I can think of, like Firefox, are notable for doing things that, at least when they debuted, the proprietary versions weren’t doing — Internet Explorer was (and is) such a mountain of suck that having an alternative that not only avoided its flaws, but made genuine innovations of its own, was a godsend. But that sort of thing is rare, unfortunately.

I’ve been running Linux as my regular desktop OS since 1996, and when I briefly had a Mac OS X system for work, I almost threw the damn thing through the wall several times a day. Part of it is what your fingers are used to. (I almost never Control-C to copy, since my fingers know the old-school X “middle click to paste”.) But what I’ve learned is _my_ Linux box, with _my_ chosen set of the nifty stuff turned off, which is completely different from how anyone else’s will end up.

On the other hand, there’s an Apple application called “Garage Band” that I end up playing with every time I follow a certain Apple fangirl into an Apple store, and I don’t see any of the open source tools coming close to that.

The worst applications for UI, though, are the open-source imitations of either Microsoft Outlook or Apple iTunes. You can kind of see what the original was trying for and that this isn’t it.

One thing that might help with this is a general decoupling of application front- and back-end code. This is likely a nightmare at this point, because so many (all) of the GUI toolkits are utterly horrible, but if you managed to do it in the right way, it’d make swappable GUIs possible, which would allow multiple competing GUIs for a single application, most designed by single people or small teams. The best of these might go some way towards bridging the gap between open and closed source interfaces.

You and your readers might be interested in these two classic pieces on this topic:

http://web.archive.org/web/20030201183139/http://mpt.phrasewise.com/discuss/msgReader$173

http://daringfireball.net/2004/04/spray_on_usability

Besides the smartphone wars, the ongoing desktop browser wars will be a decent empirical test of Tim’s claim.

Firefox in the early aughts had the luxury of being the little engine that could against IE. Microsoft’s complacency let the Mozilla team catch up feature for feature. Wide-open development plus granular customization plus extensibility yielded innovation at the margins; it was a slow and relentless march towards a more modern user experience. Imagine going back to dilapidated web standards, window-based navigation where each link occludes the last, or a static top bar without any smart recall of your bookmarks and history. (I think Firefox still executes this idea best: often I’ll type a single letter into the awesome bar and it guesses my next move. Opposite of suck.)

Now that we’re seeing a much richer mix of bottom-up and top-down competition in the browser market, Firefox is behind again on user experience. Will its deliberative processes thrive or choke under pressure? Or to pose this as a longbet: will FF have lost its double-digit share on personal computers by November 2012?

Stay tuned.

As I see it there is a trade-off. Both approaches have pluses and minuses depending on what you use it for and need. For starters you have to have a lot more money to use a Mac, whereas you can put together a powerful Ubuntu desktop for a few hundred bucks and Windows for another $100 or so. I haven’t used Android yet but I use about everything else. I use Kubuntu as my primary work desktop as well as the virus-free alternative for my 15-year-old to watch YouTube and Facebook on. I also use an iPad for reading and network testing, an iTouch for MP3s and notes in the field, a MacBook as my portable workstation and a little Eee PC with XP for everything else.

While there is a lot good about the Mac and I love all three of my machines, I find their style to be horribly arrogant, like the whole design is a reflection of Steve Jobs’ personality. The first thing I don’t like is the lack of software alternatives that the closed platform brings. You have to pay through the nose for just about everything, whereas on Windows or Linux you have a ton of great open source software for just about every use. Yeah, yeah, I know that there are alternatives for Mac, but you have to jump through more hoops to get things and make them actually work. Open source software like OpenOffice, for example, less often works right on the Mac in my experience. This isn’t just true of software but of what you have to do to customize even the smallest interface tweaks that are just built into Windows or KDE. I had to use complicated command lines just to get Finder to display hidden files. There just isn’t much middle ground between novice and power user with the Mac. They assume that either you are an idiot who can’t do anything without breaking it, so they have to hide things, or a programmer who can understand the intricacies of XML formatting. It’s like know style and if your tastes deviate a little you are not worthy of using their machines unless maybe you know how to program as well. Funnily enough, I find GNOME, the most common Linux GUI, to be annoying in the same way that Mac OS is. They simplify the interface and make it harder to customize to your own tastes without breaking things.

Windows is less odious about this, but then even Windows 7 is still a malware magnet. Unless I spend about as much time locking down my living room computer as I do lab workstations at work, my kid gets it infested with trojans within a week. And I just don’t want to deal with that. It takes me an hour to set up a Kubuntu desktop for safe browsing and homework that a 15-year-old can’t break. I suppose I could buy a Mac but then I’d spend a lot more money as well. Kubuntu works great, it’s secure and he loves all the interface tweaks he can do. I use it at work for essentially the same reasons. It doesn’t work so well on a laptop and OpenOffice can be crufty, so I have the Mac stuff as well, but given the choice on a desktop I’ll take Kubuntu.

I think this post comes a bit late. The renewed attention to UI and usability in the Free Software world started a few years ago. Many UI experts and students joined major projects and helped fix things. Distributions like Ubuntu are putting great emphasis on UI. At this point basically everybody is aware of this issue. So this is not time for rants or for considering bad UIs inherent to Free Software. It’s time to create great UIs!

A few years back Matthew Paul Thomas offered suggestions on improving Free Software interfaces and I wrote a long reply from the designers’ perspective. In short: design tasks are not much like coding, and an ecosystem that favors loose collaboration shorts the kind of ruthless aesthetic judgements necessary for good interfaces. (Which is your point too.)

But here’s what I find perennially amazing. When presented with the argument that closed systems produce superior interfaces, many programmers reflexively retreat to an attitude that “everyone is stupid but me [or, by proxy, us, meaning programmers].” To be fair, this attitude is usually presented in a positive sense such as “you too can learn Linux!” I offer as evidence cryptozoologist’s and Don Marti’s comments above. I hypothesize that this subtle arrogance works well for a group-contribution environment, where many ideas compete vigorously and the best rise to the top.

But designers (hell, most professionals) who have an attitude like this find themselves out of work pretty quickly. For all their prima donna behavior, good designers listen to their clients and their customers. They do this directly by literally listening to what people say they want, and indirectly through user testing and ethnographic observation. That doesn’t mean what people think they want is actually the right solution, but the first step is always listening/observing.

Regarding the iPhone “back” button placement it would be interesting to know how they got all apps to put it in the same place. Is there an Apple UI guideline which is accepted and followed by all independent app developers? If so, what’s the difference between presenting a guideline to an army of closed-source iPhone app developers and presenting a guideline to an army of eg. open-source Gnome app developers?

Regarding the shortcomings you experienced on your Linux Desktop, I think an important reason for that is that making a user-friendly, complete, “round” application requires quite a lot of work, esp. for the long polishing phase. And I think the various Linux Desktop communities simply do not have enough developer time available to do this polishing for a whole desktop plus applications. But I have no idea how to improve this :-/

Your example of Apple having a single “dictator” making all user interface decisions is incorrect. According to Jef Raskin (the creator of Apple’s Macintosh project), Steve Jobs has not designed a single product.

I’ve been using Macs since May, 1984, so I am very, very familiar with the Macintosh user interface as it has evolved over 26 years. I have been using Ubuntu Linux (currently 10.04 LTS) since spring 2009, and I have to say that over the past 18 months I have come to prefer the Ubuntu/Gnome desktop. There are several maddening things about the Mac OS X UI (notably, the ability to re-size a window only from the bottom right corner) that have never evolved. Good as the current Ubuntu UI is, if I don’t like the way something works, Linux provides me with the tools to make it work my way, as opposed to Apple’s more dictatorial approach.

As to bloat, most desktop versions of Linux will run comfortably in as little as 8 GB of disk space and 1 GB of memory. Try running Mac OS X in that environment.

Android is still immature and is developing rapidly. Early versions of OS X were also pretty bad (as well as quite unstable). Android will continue to evolve more rapidly than iOS, and in the long run, Android is likely to eat Apple’s lunch.

@oliver – Apple has published Human Interface Guidelines (HIG) as part of the iOS/iPhone developer kit documentation. In addition, if you use the recommended APIs for navigation, the “Back” button appears by default – the developer doesn’t have to do anything. Overall the iOS user interface is very well thought out and logical. In fact I expect to see its influence in future releases of OS X.

The difference between Apple providing guidelines and an army of open-source developers following guidelines is simple: Apple can reject applications that don’t follow its guidelines. Some open source projects do have strong gatekeepers (Linus and the Linux kernel, for example). Most are glad to get any resources at all.

“So while Ubuntu may work fine for power users, it’s not a good general-purpose solution for the 99% of the population that’s going to simply use the default UI.”

Ah, the stereotypical dripping condescension for the teeming masses. When my septuagenarian parents bought netbooks, I set them up with stock Ubuntu, and they took to it immediately – and my mother even asked me to install it on her desktop machine next time I come to visit.

She’ll be so crushed when I tell her that the inventor of the World Wide Web said it’s just not a good general-purpose solution for her.

John, the fact that you “set them up” with Ubuntu is kind of the point, right? If you’d left them to their own devices I’m willing to bet they wouldn’t have chosen Ubuntu.

A point that I forgot to include. You wrote: In short, if you want to create a company that builds great user interfaces, you should organize it like Apple does: as a hierarchy with a single guy who makes all the important decisions.

This is a good description of Mark Shuttleworth’s role in Ubuntu, and may explain why Ubuntu is, far and away, the most popular desktop Linux distribution (and perhaps also why Shuttleworth attracts so much criticism from the Linux establishment).

Personally, I don’t like the current dark themes and leftward window button placements of Ubuntu 10.04 — but since it’s Linux, it didn’t require much effort or knowledge to change these attributes to my tastes.

You praise keyboards in your image caption, and in the article. But then you claim that open interfaces are terrible. Yet I can scarcely think of a powerful yet closed text-based interface. PowerShell, Matlab, Mathematica, and Maple come to mind. But they’re vastly outnumbered by fantastic open text-based interfaces (bash, zsh, python, R, ruby, awk, imagemagick, etc).

So when you say “These characteristics—scalability and flexibility—are simply irrelevant in a user interface.” I have to disagree. Using a mouse or pointer driven interface slows us down so scalability and flexibility don’t seem to matter in the same way that colour doesn’t matter to someone who is blind.

When you say, “Human beings are pretty much all the same, and their “specs” don’t really change over time. We produce and consume data at rates that are agonizingly slow by computer standards.” I wonder why you haven’t been begging to push your Applications to support the ability to compose inputs and outputs so you can speed yourself up.

So you claim scalability and flexibility aren’t important because you can’t input much data. Then you praise Apple’s design decisions since they don’t let you handle much data. This seems backwards since I can generate a lot of data very quickly and there’s no mouse driven interface which will carve a path for me to do exactly what I want with my data. I require scalability and flexibility and mouse driven interfaces don’t support it. So I would say Apple’s applications are very poor for my use case.

Classic example of this is Drupal. Powerful web CMS, completely open source, impossible interface to understand.

Interesting read, thanks. Putting a gifted guy in the driver’s seat for making crucial decision is a way to go for any kind of business.

iPod, iPad and iTunes are not examples of getting UI right. They are horrific. I would choose a simple folder structure over what Jobs created because it works in an abysmal fashion if you want to have artists and compilations at the same level. Not to mention the retarded concept of “syncing” your player. The iPhone screen gets cluttered in a snap, and its options are even more confusing, with some programs adding themselves to the settings menu and some having options internally (and some both).

Apfel-universe is a perfect example of US-style totalitarian vertical integration with a big added dose of brainwashing.

@Timothy B Lee

Ubuntu does have an Expose-like tool working out of the box: just press super+w.

Interesting that many of the recommendations for fixing the open source usability problem focus on the lack of designers who contribute to projects, how to create incentives, etc. But in the case of the Drupal UX project where a couple of designers did volunteer to improve the usability of the software, there was a backlash and strong resistance from some parts of the Drupal developer community. This is because many programmers participate in open source projects because it’s an opportunity to write software without interference from people who have different priorities than good software development practices. At work, you have to make engineering compromises because of the influence of business people, marketing, sales, and yes, user experience designers. In your free time, you can participate in an open source project that’s essentially closed to non-developers because of the “scratch your own itch” rule.

Linux “wizards” would do well to understand OS X before knocking it down. It’s bog simple to expose hidden files in Finder; compilers are mostly free; there’s an abundance of open source software including R and Sage available. The desktop is very good, productivity software is excellent, the terminal window with perl awk and sed is a click away … the hardware is far above industry average in reliability …. Native apps like iCal and iPhoto are pretty good …. And the price premium is moderate, if you use your machine for 2 yrs, it is irrelevant. OS X gives me all the benefits of Linux apps, on a better desktop, with better integrated and more reliable hardware —- for a modest premium (in terms of my costs and revenues).

I agree that Apple products have the best UI.

I disagree that it is exclusively a result of their closed-source, top-down development. If that were true, Windows should have an equally agreeable user experience, but it does not.

A large component of Apple’s UI success is the result of tightly coupling the software and hardware, a claim that neither Windows nor a Linux-based OS can make. I remember Steve Jobs saying so himself.

Being a daily user of a variety of Linux and Unix systems, I tend to believe I am in a position to judge the merits of open user interfaces in an informed manner.

Having used the Solaris and HP/UX proprietary user interfaces extensively for decades, I can tell you that these did not outperform the best open user interfaces available with Linux releases of their time. Rather, they were on par with them. This, plus the fact that system RAM and disk were rather expensive for Sun and HP systems, meaning Linux systems typically had more memory, led to a performance advantage for Linux. More memory meant less memory swapping, which led to fewer system crashes for Linux.

Apple’s OSX UI rides on a fine Unix, and is nice. “Official” SCO Unix had a terrible user interface — it typically was intended to boot up directly into a cash register program so only the cash register program was tested — and even then it was only tested minimally for each customer’s unique requirements.

I use RHEL Linux implementations from 3.0 to 5.0 frequently and these are superior to Solaris and HP/UX. As servers, they are on par with each other — except that RHEL servers ran faster on a dollar-for-dollar basis.

Quality is really all about the level of testing a product receives. Sun Solaris was a nice product, while OpenSolaris (gone now) which was essentially Sun Solaris but used the open Gnome desktop was quite disappointing. Yet, the Gnome desktop proved itself to be a fine and reliable UI with both RHEL and Debian Linux. The OpenSolaris desktop was simply incomplete as a development project.

While some “open” Linux implementations were and are flawed, others are more completely tested.

So I am left feeling that your statements regarding open UIs are more flawed than most of the worst open UIs. The more advanced open UIs have remarkable, head-turning features that have become icons of how a powerful computer should be expected to perform.

Personally I find the OSX UI obstructive and clumsy (albeit pretty for the most part). Coming from a PC background (Wintel/Gnome) I’ve been using a Mac for the past few months to build websites and the window management is horrible, not to mention a lack of expected keyboard shortcuts, over-reliance on the mouse and innumerable little niggles (e.g. Finder not retaining state between dialogues). I expected to ‘get it’, but my enduring experience has been one of frustration and anger as the OS seems to do its best to make decisions for me (which most of the time I don’t agree with), thus drastically reducing productivity. If it were a case of getting used to it I would give it time, but after googling numerous problems I’ve been having I’ve come to the conclusion that OSX is simply lacking in a lot of little UI tweaks and features which I personally have come to depend upon (example: you can’t even change the OS’s default font size. That is *bonkers*).